Overview

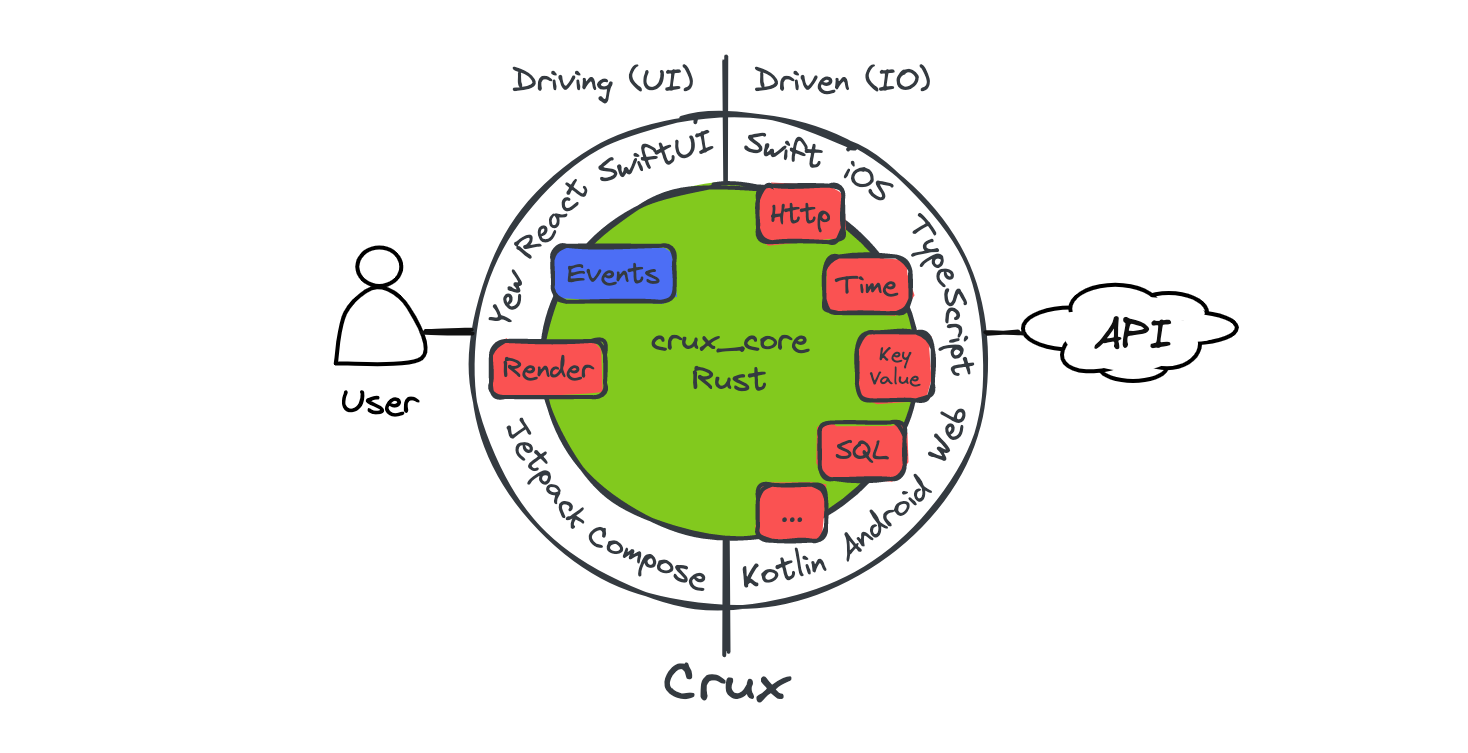

Crux is a framework for building cross-platform applications with better testability, higher code and behavior reuse, better safety, security, and more joy from better tools.

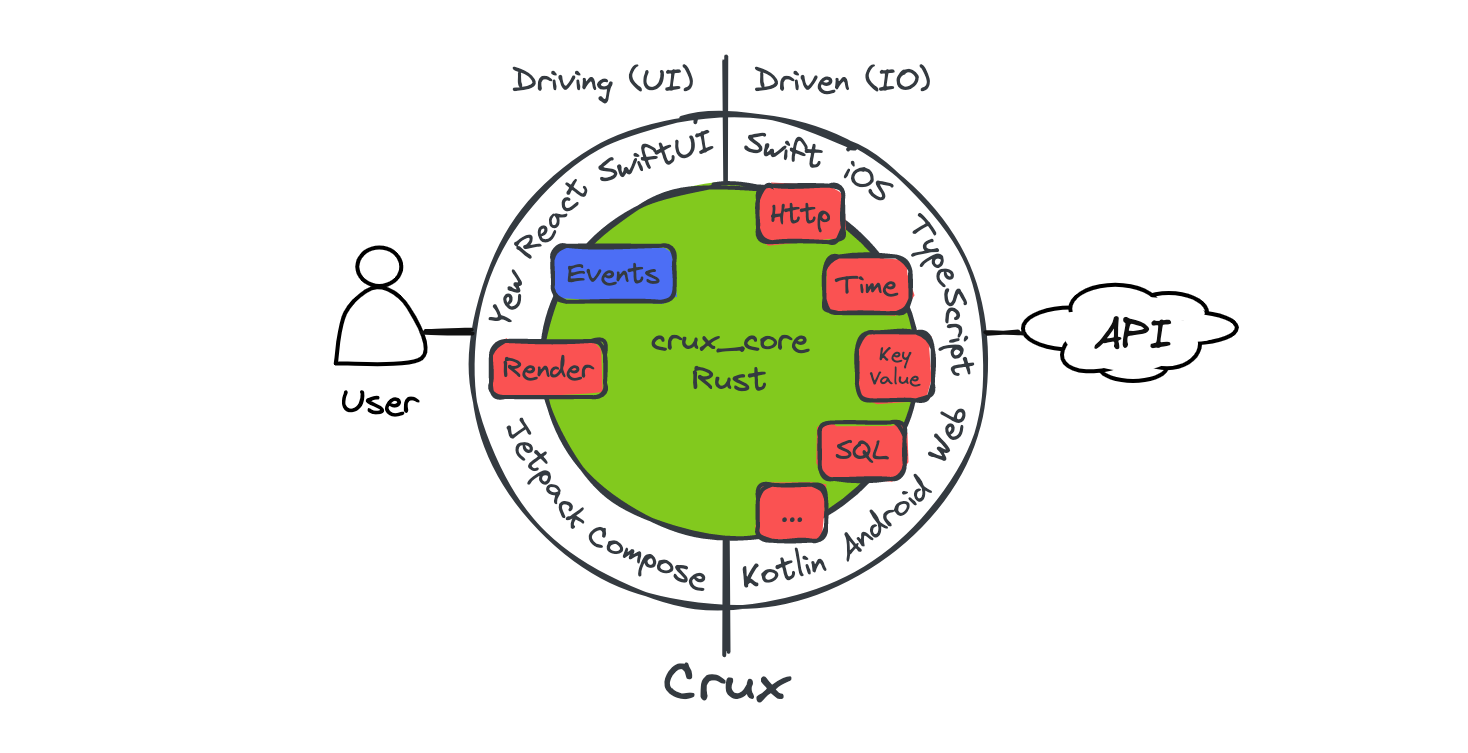

It splits the application into two distinct parts, a Core built in Rust, which drives as much of the business logic as possible, and a Shell, built in the platform native language (Swift, Kotlin, TypeScript), which provides all interfaces with the external world, including the human user, and acts as a platform on which the core runs.

The aim is to separate three kinds of code in a typical app, which have different goals:

- the presentation layer in the user interface,

- the pure logic driving behaviour and state updates in response to the user's actions, and

- the effects (or I/O) layer where network communication, storage, interactions with real-world time, and other similar things are handled

The Core handles the behaviour logic, the Shell handles the presentation layer and effect execution (but not orchestration, that is part of the behaviour and therefore in the Core). This strict separation makes the behaviour logic much easier to test without any of the other layers getting involved.

The interface between the Core and the Shell is a native FFI (Foreign Function Interface) with message passing semantics, where simple data structures are passed across the boundary, supported by cross-language code generation and type checking.

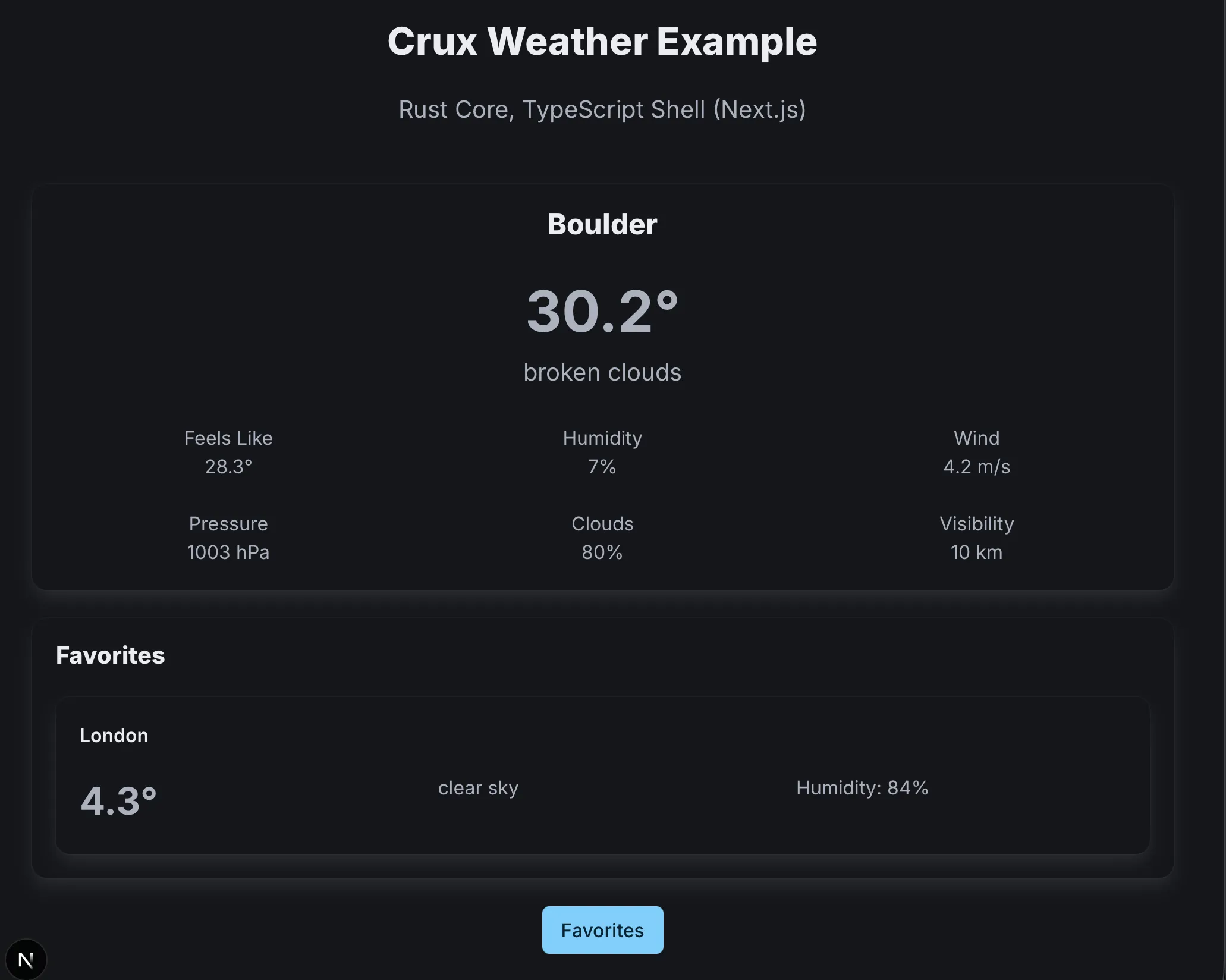

To get playing with Crux quickly, follow Part I of the book, from the Getting Started chapter onward. It will take you from zero to a basic working app on your preferred platform quickly. From there, continue on to Part II – building the Weather App, which builds on the basics and covers the more advanced features and patterns needed in a real world app.

If you just want to understand why we set out to build Crux in the first place and what problems it tries to solve, before you spend any time trying it (no hard feelings, we would too), read our original Motivation.

API docs

There are two places to find API documentation: the latest published version on docs.rs, or the very latest master docs if you too like to live dangerously.

- crux_core - the main Crux crate: latest release | latest master

- crux_http - HTTP client capability: latest release | latest master

- crux_kv - Key-value store capability: latest release | latest master

- crux_time - Time capability: latest release | latest master

You can see the latest version of this book (generated from the master branch) on Github Pages.

Crux is open source on Github. A good way to learn Crux is to explore the code, play with the examples, and raise issues or pull requests. We'd love you to get involved.

You can also join the friendly conversation on our Zulip channel.

Design overview

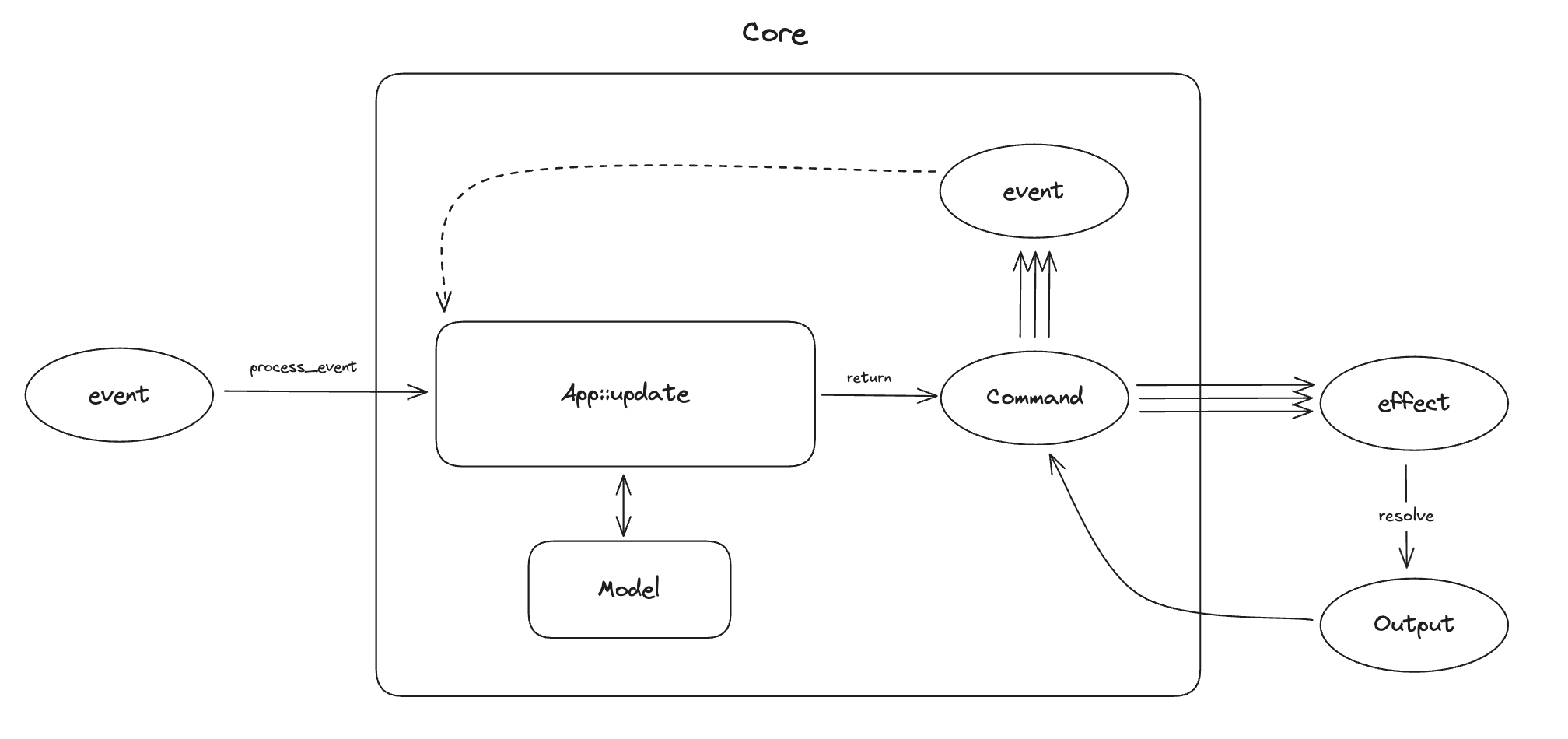

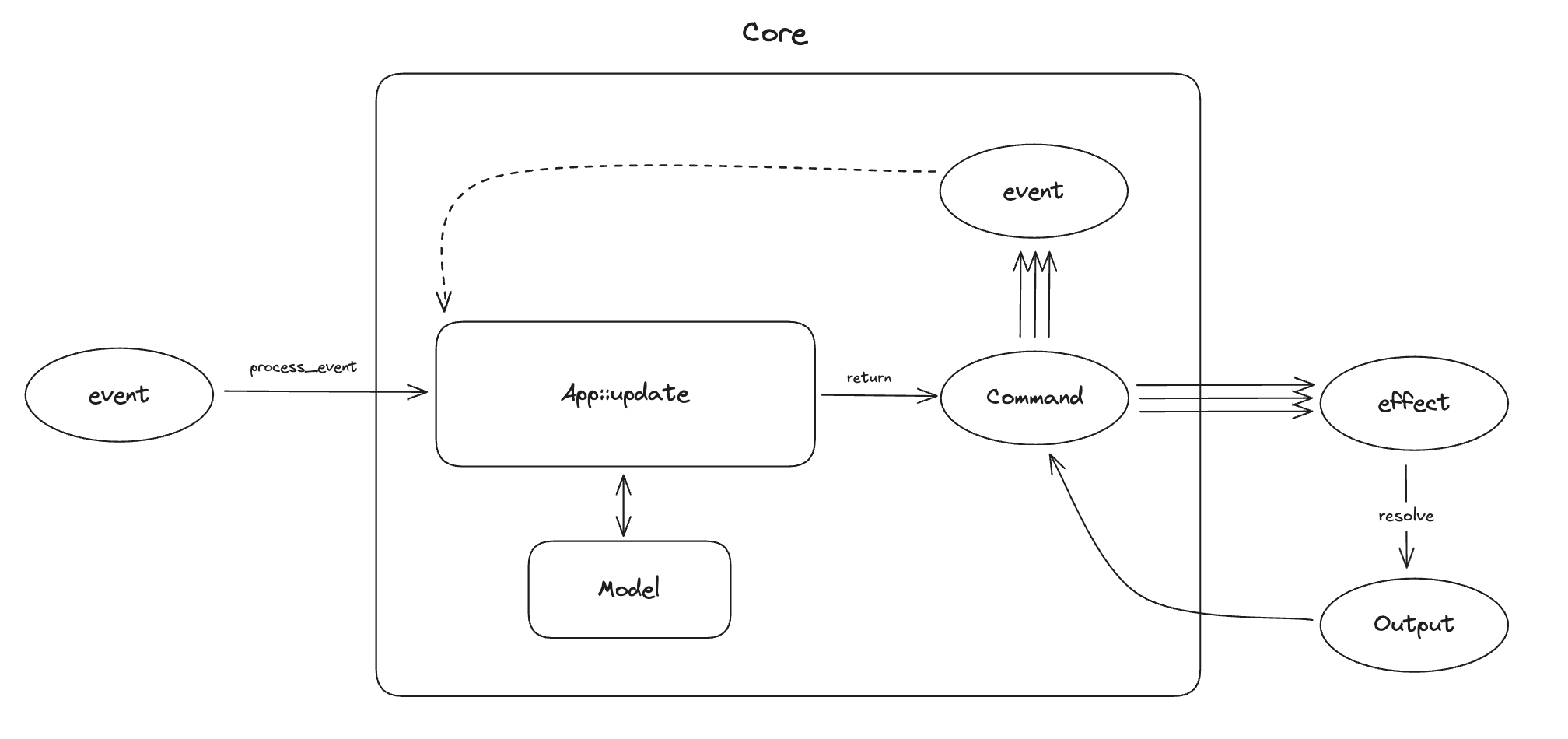

The architecture is event-driven, with state management based on event sourcing, similar to Elm or Redux. The Core holds the majority of state, which is updated in response to events happening in the Shell. The interface between the Core and the Shell is message-based.

Native UI

The user interface layer is built natively, with modern declarative UI frameworks such as Swift UI, Jetpack Compose and React/Svelte or a WASM based framework on the web. The UI layer is as thin as it can be, and all behaviour logic is implemented by the shared Core. The one restriction is that the Core is side–effect free. This is both a technical requirement (to be able to target WebAssembly), and an intentional design goal, to separate behaviour from effects and make them both easier to test in isolation.

Managed effects

Crux uses managed side-effects – the Core requests side-effects from the Shell, which executes them. The basic difference is that instead of doing the asynchronous work, the core describes the intent for the work with data (which also serves as the input for the effect), and passes this to the Shell to be performed. The Shell performs the work, and returns the outcomes back to the Core. This approach using deferred execution is inspired by Elm, and similar to how other purely functional languages deal with effects and I/O (e.g. the IO monad in Haskell). It is also similar in its laziness to how iterators work in Rust.

Type generation

The Core exports types for the messages it can understand. The Shell can call the Core and pass one of the messages. In return, it receives a set of side-effect requests to perform. When the work is completed, the Shell sends the result back into the Core, which responds with further requests if necessary.

Updating the user interface is considered one of the side-effects the Core can request. The entire interface is strongly typed and breaking changes in the core will result in build failures in the Shell.

Goals

We set out to find a better way of building apps across platforms. You can read more about our motivation. The overall goals of Crux are to:

- Build the majority of the application code once, in Rust

- Encapsulate the behavior of the app in the Core for reuse

- Follow the Ports and Adapters pattern, also known as Hexagonal Architecture to facilitate pushing side-effects to the edge, making behavior easy to test

- Strictly separate the behavior from the look and feel and interaction design

- Use the native UI tool kits to create user experience that is the best fit for a given platform

- Use the native I/O libraries to be good citizens of the ecosystem and get the benefit of any OS-provided services

Path to 1.0

Crux is used in production apps today, and we consider it production ready. However, we still have a number of things to work on to call it 1.0, with a stable API and excellent DX expected from a mature framework.

Below is a list of some of the things we know we want to do before 1.0:

- Better code generation with additional features, and support for more languages (e.g. C#, Dart, even C++...) and in turn more Shells (e.g. .NET, Flutter) which will also enable Desktop apps for Windows

- Improved documentation, code examples, and example apps for newcomers

- Improved onboarding experience, with less boilerplate code that end users have to write or copy from an example

Until then, we hope you will work with us on the rough edges, and adapt to the necessary API updates as we evolve. We strive to minimise the impact of changes as much as we can, but before 1.0, some breaking changes will be unavoidable.

Motivation

We set out to prove this approach to building apps largely because we've seen the drawbacks of all the other approaches in real life, and thought "there must be a better way". The two major available approaches to building the same application for iOS and Android are:

- Build a native app for each platform, effectively doing the work twice.

- Use React Native or Flutter to build the application once1 and produce native looking and feeling apps which behave nearly identically.

The drawback of the first approach is doing the work twice. In order to build every feature for iOS and Android at the same time, you need twice the number of people, either people who happily do Swift and Kotlin (and they are very rare), or more likely a set of iOS engineers and another set of Android engineers. This typically leads to forming two separate, platform-focused teams. We have witnessed situations first-hand, where those teams struggle with the same design problems, and despite one encountering and solving the problem first, the other one can learn nothing from their experience (and that's despite long design discussions).

We think such experiences with the platform native approach are common, and the reason why people look to React Native and Flutter.

The issues with the second approach are two-fold:

- Only mostly native user interface

- In the case of React Native, the JavaScript ecosystem tooling disaster

React Native (we'll focus the discussion on it, but most of the below applies to Flutter too) effectively takes over, and works hard to insulate the engineer from the native platform underneath and pretend it doesn't really exist, but of course, inevitably, it does exist and the user interface ends up being built in a combination of 90% JavaScript/TypeScript and 10% Kotlin/Swift. This was a major win when React Native was first introduced, because the platform native UI toolkits were imperative, following a version of MVC architecture, and generally made it quite difficult to get UI state management right. React on the other hand is declarative, leaving much less space for errors stemming from the UI getting into an undefined state (although as apps got more complex and codebases grew, React's state management model got more complex with them). The benefit of declarative UI was clearly recognised by iOS and Android, and both introduced their own declarative UI toolkit - Swift UI and Jetpack Compose. Both of them are quite good, matching that particular advantage of React Native, and leaving only building things once (in theory). But in exchange, they have to be written in JavaScript (and adjacent tools and languages).

Why not build all apps in JavaScript?

The main issue with the JavaScript ecosystem is that it's built on sand. The underlying language is quite loose and has a lot of inconsistencies. It came with no package manager originally, now it has three. To serve code to the browser, it gets bundled, and the list of bundlers is too long to include here, and even 10 years since the introduction of ES modules, the ecosystem is still split and the competing module standards make all tooling more complex and difficult to configure.

JavaScript was built as a dynamic language. This means a lot of basic human errors,

which are made while writing the code are only discovered when running the code.

Static type systems aim to solve that problem and TypeScript

adds this onto JavaScript, but the types only go so far (until they hit an any type,

or dependencies with no type definitions), and they disappear at runtime, so you don't

get a type based conditional (well, kind of).

In short, upgrading JavaScript to something modern, capable of handling a large app codebase with multiple people or even teams working on it is possible, but takes a lot of tooling. Getting all this tooling set up and ready to build things is an all day job, and so more tooling, like Vite has popped up providing this configuration in a box, batteries included. Perhaps the final admission of this problem is the Biome toolchain (formerly the Rome project), attempting to bring all the various tools under one roof (and Biome itself is built in Rust...).

It's no wonder that even a working setup of all the tooling has sharp edges, and cannot afford to be nearly as strict as tooling designed with strictness in mind, such as Rust's. The heart of the problem is that computers are strict and precise instruments, and humans are sloppy creatures. With enough humans (more than 10, being generous) and no additional help, the resulting code will be sloppy, full of unhandled edge cases, undefined behaviour being relied on, circular dependencies preventing testing in isolation, etc. (and yes, these are not hypotheticals).

Contrast that with Rust, which is as strict as it gets, and generally backs up

the claim that if it compiles it will work (and if you struggle to get it past

the compiler, it's probably a bad idea). The tooling and package management is

built in with cargo. There are fewer decisions to make when setting up a Rust

project.

In short, we think the JS ecosystem has jumped the shark, the "complexity toothpaste" is out of the tube, and it's time to stop. But there's no real viable alternative.

Crux is our attempt to provide one.

-

In reality it's more like 1.4x effort build the same app for two platforms. ↩

Getting started

We generally recommend building Crux apps from inside out, starting with the Core.

This part will first take you through setting up the tools and building the Core, and writing tests to make sure everything works as expected. Finally, once we're confident we have a working core, we'll set up the necessary bindings for the shell and build the UI for your chosen platform.

But first, we need to make sure we have all the necessary tools

Install the tools

This is an example of a

rust-toolchain.toml

file, which you can add at the root of your repo. It should ensure that the

correct rust channel and compile targets are installed automatically for you

when you use any rust tooling within the repo.

You may not need all the targets if you're not planning to build a fully cross platform app.

[toolchain]

channel = "stable"

components = ["rustfmt", "rustc-dev"]

targets = [

"aarch64-apple-darwin",

"aarch64-apple-ios",

"aarch64-apple-ios-sim",

"aarch64-linux-android",

"wasm32-unknown-unknown",

"x86_64-apple-ios",

]

profile = "minimal"

For testing, we also recommend to install cargo-nextest, the test runner we'll be using

in the examples.

cargo install cargo-nextest

Create the core crate

We need a crate to hold our application's core, but since one of our shell options later will be rust based, we'll set up a cargo workspace to have some isolation between the core and the other Rust based modules

The workspace and library manifests

First, create a workspace and start with a /Cargo.toml file, at the monorepo

root, to add the new library to our workspace.

It should look something like this:

# /Cargo.toml

[workspace]

resolver = "3"

members = ["shared"]

[workspace.package]

edition = "2024"

rust-version = "1.90"

[workspace.dependencies]

anyhow = "1.0.102"

crux_core = "0.17.0"

serde = "1.0.228"

The shared library

The first library to create is the one that will be shared across all platforms,

containing the behavior of the app. You can call it whatever you like, but we

have chosen the name shared here. You can create the shared rust library, like

this:

cargo new --lib shared

The library's manifest, at /shared/Cargo.toml, should look something like the

following,

# /shared/Cargo.toml

[package]

name = "shared"

version = "0.1.0"

edition.workspace = true

rust-version.workspace = true

[lib]

crate-type = ["cdylib", "lib", "staticlib"]

name = "shared"

[dependencies]

crux_core.workspace = true

serde = { workspace = true, features = ["derive"] }

Note the crate-type in the [lib] section. This is in preparation for linking with the

shells:

libis the default rust library when linking into a rust binarystaticlibis a static library (libshared.a) for use with iOS appscdylibis a C-ABI dynamic library (libshared.so) for use with JNA in an Android app

The basic files

The only missing part now is your src/lib.rs file. This will eventually

contain a fair bit of configuration for the shell interface, so we tend to

recommend reserving it to this job and creating a a src/app.rs module

for your app code.

For now, the lib.rs file looks as follows:

// src/lib.rs

mod app;

pub use app::*;and app.rs can be empty, but let's put our app's main type in it,

call it Counter:

// src/app.rs

#[derive(Default)]

pub struct Counter;Running

cargo build

should build your Core. Let's make it do something now.

A very basic app

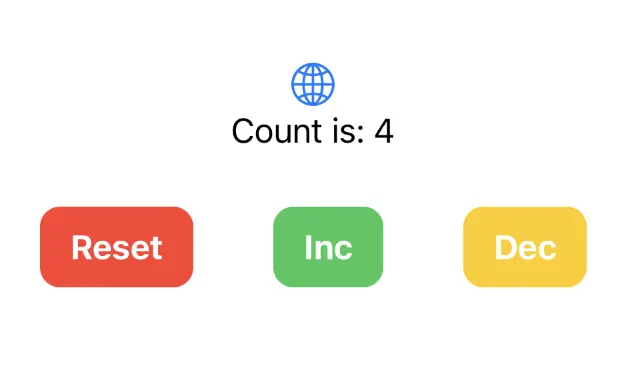

The basic app we'll build as an example to demonstrate the interaction between the Shell and the Core and the state management will be the well known and loved counter app. A simple counter we can increment, decrement and reset.

Code of the app

You can find the full code for this part of the guide here

In the last chapter, we started with the main type

#[derive(Default)]

pub struct Counter;We need to implement Default so that Crux can construct the app for us.

To turn it into a Crux app, we need to implement the App trait from the

crux_core crate.

use crux_core::App;

impl App for Counter {

}If you're following along, the compiler is now screaming at you that you're

missing four associated types for the trait — Event, Model, ViewModel,

and Effect.

Let's add them and talk about them one by one.

Event

Event defines all the possible events the app can respond to. It is essentially the Core's public API.

In our case it will look as follows:

#[derive(Serialize, Deserialize, Clone, Debug)]

pub enum Event {

Increment,

Decrement,

Reset,

}Those are the three things we can do with the counter. None of them need any additional

information, so this simple enum will do. It is serializable, because it will

eventually be crossing the FFI boundary. We will get to that soon.

Model

Model holds our application's internal state. You can probably guess what this will look like:

#[derive(Default)]

pub struct Model {

count: isize,

}It is a simple counter after all. Model stays in the core, so it doesn't need to serialize.

You can derive (or implement) Default and have Crux create an instance of your app and your model for you, or you can explicitly create a core with specified App and Model instances (this may be useful if you need to set up some initial state).

ViewModel

ViewModel represents the user interface at any one point in time. This is our indirection between the internal state and the UI on screen. In the case of the counter, this is pretty academic, there is no practical reason for making them different, but for the sake of the example, let's add some formatting in the mix and make it a string.

#[derive(Serialize, Deserialize, Clone, Default)]

pub struct ViewModel {

pub count: String,

}The difference between Model and ViewModel will get a lot more pronounced once we introduce

some navigation into the mix in Part II.

Effect

For now, the counter has no side effects. Except it wants to update the user interface, and that is also a side effect. We'll go with this:

use crux_core::macros::effect;

use crux_core::render::RenderOperation;

#[effect]

#[derive(Debug)]

pub enum Effect {

Render(RenderOperation),

}We're saying "the only side effect of our behaviour is rendering the user interface".

The Effect type is worth understanding further, but in order to do that we need to

talk about what makes Crux different from most UI frameworks.

Managed side-effects

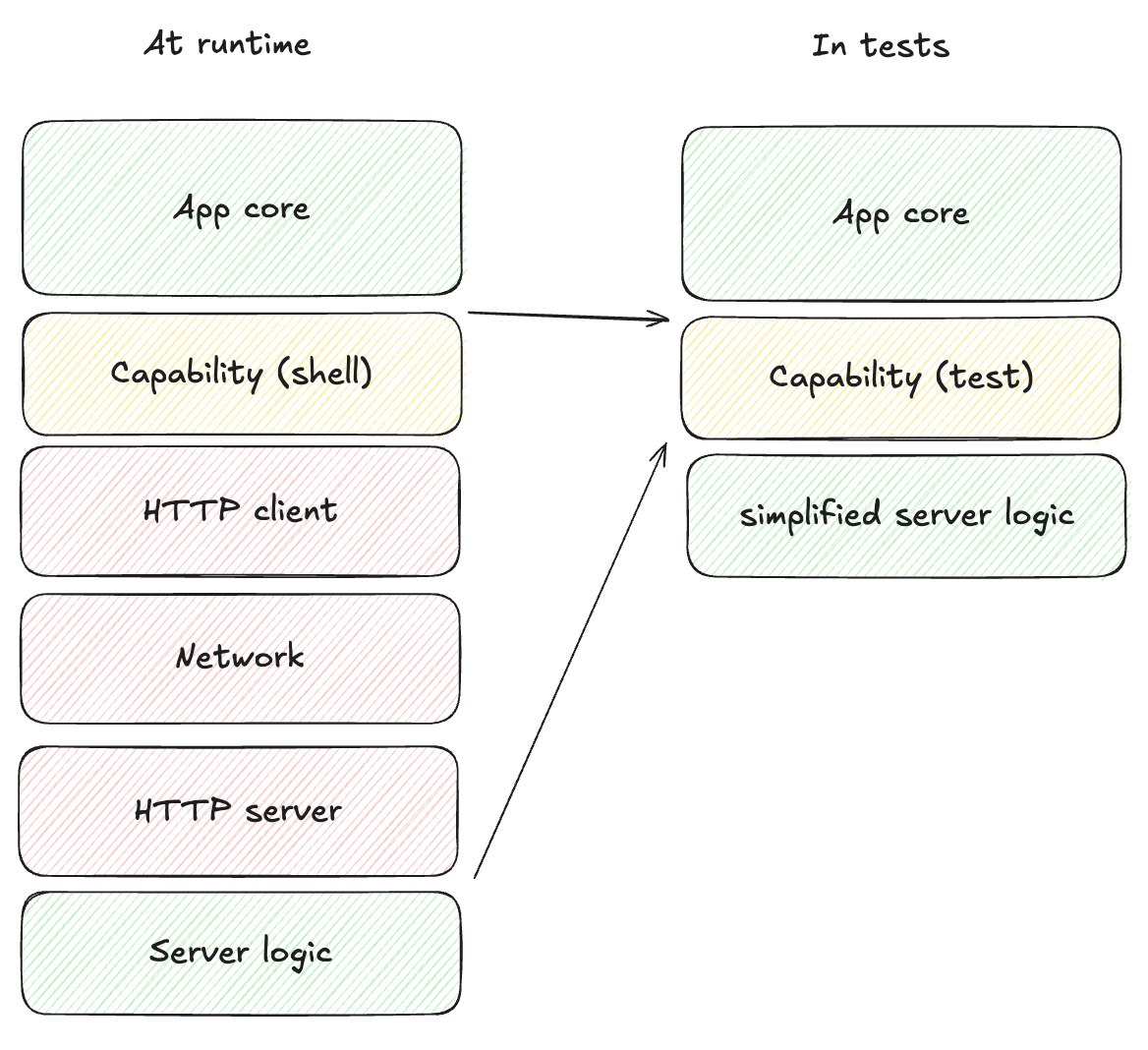

One of the key design choices in Crux is that the Core is free of side-effects (besides its internal state). Your application can never perform anything that directly interacts with the environment around it - no network calls, no reading/writing files, not even updating the screen. Actually doing all those things is the job of the Shell, the core can only ask for them to be done.

This makes the core portable between platforms, and, importantly, very easy to test. It also separates the intent – the "functional" requirements – from the implementation of the side-effects and the "non-functional" requirements (NFRs).

For example, your application knows it wants to store data in a SQL database, but it doesn't need to know or care whether that database is local or remote. That decision can even change as the application evolves, and be different on each platform. We won't go into the detail at this point, because we don't need the full extent of side effects just yet. If you want to know more now, you can jump ahead to the chapter on Managed Effects, but it's probably a bit much at this point. Up to you.

All you need to know for now is that for us to ask the Shell for side effects, it will need to know what side effects it needs to handle, so we will need to list the possible kinds of effects (as an enum). Effects are simply messages describing what should happen. In our case the only option is asking for a UI update (or, more precisely, telling the shell a new view model is available).

That's enough about effects for now, we will spend a lot more time with them later on.

Implementing the App trait

We now have all the building blocks to implement the App trait. Here is

where we end up (straight from the actual example code):

impl App for Counter {

type Event = Event;

type Model = Model;

type ViewModel = ViewModel;

type Effect = Effect;

fn update(&self, event: Event, model: &mut Model) -> Command<Effect, Event> {

match event {

Event::Increment => model.count += 1,

Event::Decrement => model.count -= 1,

Event::Reset => model.count = 0,

}

render()

}

fn view(&self, model: &Model) -> ViewModel {

ViewModel {

count: format!("Count is: {}", model.count),

}

}

}The update function is the heart of the app, it manages the state transitions

of the app. It responds to events by (optionally) updating the state. You

may have noticed the strange return type: Command<Effect, Event>.

This is the request for some side-effects. We seem to be accumulating terminology, so let's do a quick recap:

- Effect - a request for a type of side-effect (e.g. a HTTP request)

- Operation - carried by the Effect, specifies the data for the effect (e.g. the URL, method, headers, body...)

- Command - a bundle of effect requests which execute together, sequentially, in parallel or in a more complex coordination

In real apps, we typically use a few kinds of effects over and over,

and so it's necessary to allow reuse. That's what the Effect enum does, it

bundles together effects of the same type, defined by the same module or crate (We

call those modules Capabilities, but lets not worry about those yet).

The other thing that happens in real apps is mixing different kinds of effects in workflows, chaining them, running them concurrently, even racing them. That's what commands allow you to do.

Our update function looks at the event it got, updates the model.count, and

since the count has changed, the UI needs to update, so it calls render(). The

render() call returns a Command, which update just passes on to the caller.

The view function's job is to return the representation of what we want the Shell to show

on screen. It's up to the Shell to call it when ready. Our view does a bit of string

formatting and wraps it in a ViewModel.

That's a working counter done. It's obviously really basic, but it's enough for us to test it.

Testing the Counter app

In this chapter we'll write some basic tests for our counter app. It is tempting to skip reading this, but please don't. Testing and testability is one of the most important benefits of Crux, and even in this simple case, subtle things are going on, which we'll build on later.

Before writing tests that use Crux's command test helpers, enable the testing

API for tests in shared/Cargo.toml:

[dev-dependencies]

crux_core = { workspace = true, features = ["testing"] }

This enables Crux's test-only command helpers, including the generated

EffectTestExt fluent helpers and the Command assertion helpers we'll use in

tests, without adding them to your production dependency.

The first test

Technically, we've already broken the rules and written code without having a failing test for it. We're going to let that slip in the name of education, but let's fix that before someone alerts the TDD authorities.

The first test we're going to write will check that resetting the count renders the UI.

#[cfg(test)]

mod test {

use super::*;

#[test]

fn renders() {

let app = Counter;

let mut model = Model::default();

// Check update asked us to `Render`, and only that

app.update(Event::Reset, &mut model).expect_only_render();

}

}We create an instance of the app, and an instance of the model. Then we call

update with the Event::Reset event. As you may remember we get back

a Command, which we expect to carry a request for a render operation. The

#[effect] macro on the Effect enum we declared earlier generates chainable

test-helper methods for Command. These methods live on a generated trait

called EffectTestExt, which needs to be in scope. One of them is

expect_only_render, which asserts "the next effect is a Render and there are

no others." It panics if either condition fails. The trait is generated

alongside the Effect declaration when crux_core's testing feature is

enabled, so use super::*; brings it into scope automatically for module tests.

That test should pass (check with cargo nextest run). Next up, we can check

that the view model is rendered correctly

#[test]

fn shows_initial_count() {

let app = Counter;

let model = Model::default();

let actual_view = app.view(&model).count;

let expected_view = "Count is: 0";

assert_eq!(actual_view, expected_view);

}This is a lot more basic, just a simple equality assertion. Let's try something a bit more interesting

#[test]

fn increments_count() {

let app = Counter;

let mut model = Model::default();

// Check update asked us to `Render`, and only that

app.update(Event::Increment, &mut model).expect_only_render();

let actual_view = app.view(&model).count;

let expected_view = "Count is: 1";

assert_eq!(actual_view, expected_view);

}When we send the increment event, we expect to be told to render, and we expect the view to show "Count is: 1".

You could just as well test just the model state, this is really up to you, what is more convenient and whether you prefer your tests to know about how your state works and to what extent.

By now you get the gist, so here's all the tests to satisfy ourselves that the app does in fact work:

#[cfg(test)]

mod test {

use super::*;

#[test]

fn renders() {

let app = Counter;

let mut model = Model::default();

app.update(Event::Reset, &mut model).expect_only_render();

}

#[test]

fn shows_initial_count() {

let app = Counter;

let model = Model::default();

let actual_view = app.view(&model).count;

let expected_view = "Count is: 0";

assert_eq!(actual_view, expected_view);

}

#[test]

fn increments_count() {

let app = Counter;

let mut model = Model::default();

app.update(Event::Increment, &mut model)

.expect_only_render();

let actual_view = app.view(&model).count;

let expected_view = "Count is: 1";

assert_eq!(actual_view, expected_view);

}

#[test]

fn decrements_count() {

let app = Counter;

let mut model = Model::default();

app.update(Event::Decrement, &mut model)

.expect_only_render();

let actual_view = app.view(&model).count;

let expected_view = "Count is: -1";

assert_eq!(actual_view, expected_view);

}

#[test]

fn resets_count() {

let app = Counter;

let mut model = Model::default();

let _ = app.update(Event::Increment, &mut model);

let _ = app.update(Event::Reset, &mut model);

// Was the view updated correctly?

let actual = app.view(&model).count;

let expected = "Count is: 0";

assert_eq!(actual, expected);

}

#[test]

fn counts_up_and_down() {

let app = Counter;

let mut model = Model::default();

let _ = app.update(Event::Increment, &mut model);

let _ = app.update(Event::Reset, &mut model);

let _ = app.update(Event::Decrement, &mut model);

let _ = app.update(Event::Increment, &mut model);

let _ = app.update(Event::Increment, &mut model);

// Was the view updated correctly?

let actual = app.view(&model).count;

let expected = "Count is: 1";

assert_eq!(actual, expected);

}

}You can see that occasionally, we test for the render to be requested. This will be important later, because

we'll be able to not only check for the effects, but also resolve them – provide the value they requested,

for example the response to a HTTP request. The same EffectTestExt extension generates a resolve_<variant>

method per effect variant for that purpose, which we'll meet in Testing with Effects.

That will let us test entire user flows calling web APIs, working with local storage and timers, and anything else, all at the speed of unit test and without ever touching the external world or writing a single fake (and maintaining it later).

For now though, let's actually give this thing some user interface. Time to build a Shell.

Preparing to add the Shell

So far, we've built a basic app in relatively basic Rust. If we now want to expose it to a Shell written in a different language, we'll have to set up the necessary plumbing, starting with the foreign function interface.

The core FFI bindings

From the work so far, you may have noticed the app has a pretty limited API,

basically the update and view methods. There's one more for resolving

effects (called resolve), but that really is it. We need to make those three methods available

to the Shell, but once that's done, we don't have to touch it again.

Let's briefly talk about what we want from this interface. Ideally, in our language of choice we would:

- have a native equivalent of the

update,viewandresolvefunction - have an equivalent for our

Event,EffectandViewModeltypes - not have to worry about what black magic is happening behind the scenes to make that work

Crux provides code generation support for all of the above.

It isn't in any way actual black magic. What happens is Crux exposes FFI calls taking and returning

the values serialized with bincode (by default), and generated "foreign" (Swift, Kotlin, TypeScript, ...)

types handling the foreign side of the serialization.

Yes, this introduces some extra work to the FFI, but generally, for each user interaction we make a relatively small number of round-trips (almost certainly less than ten), and our benchmarks say we can make thousands of them per second. The real throughput is dependent on how much data gets serialized, but it only becomes a problem with really large messages, and advanced workarounds exist. You most likely don't need to worry about it, at least not for now.

Preparing the core

We will prepare the core for both kinds of supported shells - native ones and WebAssembly ones.

To help with the native setup, Crux uses Mozilla's Uniffi to generate the bindings. For WebAssembly, it uses wasm-bingen.

First, lets update our Cargo.toml:

# shared/Cargo.toml

[package]

name = "shared"

version = "0.1.0"

edition.workspace = true

rust-version.workspace = true

[lib]

crate-type = ["cdylib", "lib", "staticlib"]

[[bin]]

name = "codegen"

required-features = ["codegen"]

[features]

facet_typegen = ["crux_core/facet_typegen"]

uniffi = ["dep:uniffi"]

wasm_bindgen = ["dep:wasm-bindgen"]

codegen = [

"crux_core/cli",

"dep:clap",

"dep:log",

"dep:pretty_env_logger",

"uniffi",

"facet_typegen"

]

[dependencies]

facet = "=0.44"

crux_core.workspace = true

serde = { workspace = true, features = ["derive"] }

# optional dependencies

clap = { version = "4.6.1", optional = true, features = ["derive"] }

log = { version = "0.4.29", optional = true }

pretty_env_logger = { version = "0.5.0", optional = true }

uniffi = { version = "=0.29.4", optional = true }

wasm-bindgen = { version = "0.2.118", optional = true }

A lot has changed! The key things we added are:

- a

bintarget calledcodegen, which is how we're going to run all the code generation - feature flags to optionally enable

uniffiandwasm_bindgen, and grouped those undercodegenalongside some dependencies which are optional depending on that feature flag being enabled - dependencies we need for the code generation

And since we've declared the codegen target, we need to add the code for it.

// shared/src/bin/codegen.rs

use std::path::PathBuf;

use clap::{Parser, ValueEnum};

use crux_core::{

cli::{BindgenArgsBuilder, bindgen},

type_generation::facet::{Config, TypeRegistry},

};

use log::info;

use uniffi::deps::anyhow::Result;

use shared::Counter;

#[derive(Copy, Clone, PartialEq, Eq, PartialOrd, Ord, ValueEnum)]

enum Language {

Swift,

Kotlin,

Csharp,

Typescript,

}

#[derive(Parser)]

#[command(version, about, long_about = None)]

struct Args {

#[arg(short, long, value_enum)]

language: Language,

#[arg(short, long)]

output_dir: PathBuf,

}

fn main() -> Result<()> {

pretty_env_logger::init();

let args = Args::parse();

let typegen_app = TypeRegistry::new().register_app::<Counter>()?.build()?;

let name = match args.language {

Language::Swift => "App",

Language::Kotlin => "com.crux.examples.counter",

Language::Csharp => "CounterApp.Shared",

Language::Typescript => "app",

};

let config = Config::builder(name, &args.output_dir).build();

match args.language {

Language::Swift => {

info!("Typegen for Swift");

typegen_app.swift(&config)?;

}

Language::Kotlin => {

info!("Typegen for Kotlin");

typegen_app.kotlin(&config)?;

info!("Bindgen for Kotlin");

let bindgen_args = BindgenArgsBuilder::default()

.crate_name(env!("CARGO_PKG_NAME").to_string())

.kotlin(&args.output_dir)

.build()?;

bindgen(&bindgen_args)?;

}

Language::Csharp => {

info!("Typegen for C#");

typegen_app.csharp(&config)?;

}

Language::Typescript => {

info!("Typegen for TypeScript");

typegen_app.typescript(&config)?;

}

}

Ok(())

}This is essentially boilerplate for a CLI we can use to run the binding generation and type generation. But it's also a place where you can customize how they work if you have some more advanced needs.

It uses the facet based type generation from crux_core to scan the App for types which will cross

the FFI boundary, collect them and then, depending on what language should be generated builds the code

for it and places it into a specified output_dir directory.

We will call this CLI from the shell projects shortly.

Codegen, typegen, bindgen, which is it?

You'll here these terms thrown around here and there in the docs, so it's worth clarifying what we mean

bindgen – "bindings generation" – provides APIs in the foreign language to call the core's Rust FFI APIs.

For most platforms we use UniFFI, except for WebAssembly, where we use wasm_bindgen

typegen – "type generation" – The core's FFI interface operates on bytes, but both Rust and the languages we're targeting are generally strongly typed. To facilitate the serialization / deserialization, we generate type definition reflecting the Rust types from the core in the foreign language (Swift, Kotlin, TypeScript, ...), which all serialize consistently.

codegen – you guessed it, "code generation" – is the two things above combined.

Updating our app.rs

There's a few things we need to do to our app.rs module to support typegen. The first thing we need to do is update the annotation of the Effect type to tell our effect attribute macro that we want to use the Facet-based typegen.

#[derive(Debug)]

#[effect(facet_typegen)] // previously #[effect]

pub enum Effect {

Render(RenderOperation),

}We also need to annotate the other types that cross the FFI boundary with the Facet derive macro. Note that we are using Facet v0.31 (with crux_core v0.17), and so we also need to specify a layout for enums, e.g. repr(C) or repr(u8).

use facet::Facet;

// derive Facet and specify layout

#[derive(Facet, Serialize, Deserialize, Clone, Debug)]

#[repr(C)]

pub enum Event {

Increment,

Decrement,

Reset,

}

// derive Facet

#[derive(Facet, Serialize, Deserialize, Clone, Default)]

pub struct ViewModel {

pub count: String,

}Bindings code

Now we need to add the Rust side of the bindings into our code. Update your lib.rs to look like this:

// shared/src/lib.rs

#![allow(clippy::unsafe_derive_deserialize)]

mod app;

pub mod ffi;

#[cfg(feature = "csharp")]

pub mod ffi_csharp;

pub use app::*;

pub use crux_core::Core;

#[cfg(feature = "uniffi")]

const _: () = assert!(

uniffi::check_compatible_version("0.29.4"),

"please use uniffi v0.29.4"

);

#[cfg(feature = "uniffi")]

uniffi::setup_scaffolding!();This code uses our feature flags to conditionally initialize the UniFFI bindings and check the version in use.

More importantly, it introduced a new ffi.rs module. Let's look at it closer:

// shared/src/ffi.rs

use crux_core::{

Core,

bridge::{Bridge, EffectId},

};

use crate::Counter;

/// The main interface used by the shell

#[cfg_attr(feature = "uniffi", derive(uniffi::Object))]

#[cfg_attr(feature = "wasm_bindgen", wasm_bindgen::prelude::wasm_bindgen)]

pub struct CoreFFI {

core: Bridge<Counter>,

}

impl Default for CoreFFI {

fn default() -> Self {

Self::new()

}

}

#[cfg_attr(feature = "uniffi", uniffi::export)]

#[cfg_attr(feature = "wasm_bindgen", wasm_bindgen::prelude::wasm_bindgen)]

impl CoreFFI {

#[cfg_attr(feature = "uniffi", uniffi::constructor)]

#[cfg_attr(

feature = "wasm_bindgen",

wasm_bindgen::prelude::wasm_bindgen(constructor)

)]

#[must_use]

pub fn new() -> Self {

Self {

core: Bridge::new(Core::new()),

}

}

/// Send an event to the app and return the effects.

/// # Panics

/// If the event cannot be deserialized.

/// In production you should handle the error properly.

#[must_use]

pub fn update(&self, data: &[u8]) -> Vec<u8> {

let mut effects = Vec::new();

match self.core.update(data, &mut effects) {

Ok(()) => effects,

Err(e) => panic!("{e}"),

}

}

/// Resolve an effect and return the effects.

/// # Panics

/// If the `data` cannot be deserialized into an effect or the `effect_id` is invalid.

/// In production you should handle the error properly.

#[must_use]

pub fn resolve(&self, id: u32, data: &[u8]) -> Vec<u8> {

let mut effects = Vec::new();

match self.core.resolve(EffectId(id), data, &mut effects) {

Ok(()) => effects,

Err(e) => panic!("{e}"),

}

}

/// Get the current `ViewModel`.

/// # Panics

/// If the view cannot be serialized.

/// In production you should handle the error properly.

#[must_use]

pub fn view(&self) -> Vec<u8> {

let mut view_model = Vec::new();

match self.core.view(&mut view_model) {

Ok(()) => view_model,

Err(e) => panic!("{e}"),

}

}

}Broad strokes: we define a type for core with FFI, which holds a Bridge wrapping our Counter, and

provide implementations of the three API methods taking and returning byte buffers.

The translation between rust types and the byte buffers is the job of the bridge (it also holds the effect requests inside the core under an id, which can be sent out to the Shell and used to resolve the effect, but more on that later).

Notice the Shell is in charge of creating the instance of this type, so in theory your Shell can have several instances of the app if it wants to.

There are many attribute macros annotating the FFI type for uniffi and wasm_bindgen, which generate

the actual code making them available as FFIs. We recommend the respective documentation if you're

interested in the detail of how this works. The notable part is that both libraries have a level of support for

various basic and structured data types which we don't use, and instead we serialize the data with Serde,

and generate types with facet_generate to make the support consistent.

It's not essential for you to understand the detail of the above code now. You won't need to change it, unless you're doing something fairly advanced, by which time you'll understand it.

Platform native part

Okay, with that plumbing, the Core part of adding a shell is complete. It's not a one liner, but you will only set this up once, and most likely won't touch it again, but having the ability, should you need to, is important.

Now we can proceed to the actual shell for your platform of choice:

- iOS with Swift and SwiftUI

- Android with Kotlin and Jetpack Compose

- Web with TypeScript, React and Next.js

- Rust in WebAssembly with Leptos

iOS/macOS with SwiftUI

In this section, we'll set up Xcode to build and run the simple counter app we built so far, targeting both iOS and macOS from a single project.

We think that using XcodeGen may be the simplest way to create an Xcode project to build and run a simple Apple app that calls into a shared core.

If you'd rather set up Xcode manually, you can do that, but most of this section will still apply. You just need to add the Swift package dependencies into your project by hand.

When we use Crux to build Apple apps, the Core API bindings are generated in Swift (with C headers) using Mozilla's UniFFI.

The shared core, which we built in previous chapters, is compiled to a static library and linked into the app binary.

The shared types are generated by Crux as a Swift package, which we can add to our project as a dependency. The Swift code to serialize and deserialize these types across the boundary is also generated by Crux as Swift packages.

Compile our Rust shared library

When we build our app, we also want to build the Rust core as a static library so that it can be linked into the binary that we're going to ship.

Other than Xcode and the Apple developer tools, we will use

cargo-swift to generate a

Swift package for our shared library, which we can add in Xcode.

To match our current version of UniFFI, we need to install version 0.9 of cargo-swift. You can install it with

cargo install cargo-swift --version '=0.9'

To run the various steps, we'll also use the Just task runner.

cargo install just

Let's write the Justfile and we can look at what happens. Here are the key tasks (the full Justfile also includes linting, CI and cleanup targets):

# /apple/Justfile

# generates Swift types via codegen binary

typegen:

cargo run --package shared --bin codegen \

--features codegen,facet_typegen \

-- --language swift --output-dir generated

# builds the shared library as a Swift package using cargo-swift

package:

cargo swift package \

--name Shared \

--platforms ios macos \

--lib-type static \

--features uniffi

# rebuilds the Xcode project from project.yml

generate-project:

xcodegen

# generates types, builds shared package, and regenerates Xcode project

generate: typegen package generate-project

# builds the project (generates first)

build: generate

xcodebuild \

-project CounterApp.xcodeproj \

-scheme CounterApp-macOS \

-configuration Debug \

build

# local development workflow

dev: build

The main task is dev which we'll use shortly. It runs build,

which in turn runs typegen, package and generate-project.

typegen will use the codegen CLI we

prepared earlier, and package will use

cargo swift to create a Shared package with our app binary and

the bindgen code. That package will be our Swift interface to the

core.

Finally generate-project will run xcodegen to give us an Xcode

project file. They are famously fragile files and difficult to

version control, so generating it from a less arcane source of truth

seems like a good idea (yes, even if that source of truth is YAML).

Here's the project file:

# /apple/project.yml

name: CounterApp

packages:

Shared:

path: ./generated/Shared

App:

path: ./generated/App

options:

bundleIdPrefix: com.crux.examples.counter

attributes:

BuildIndependentTargetsInParallel: true

targetTemplates:

app:

type: application

sources:

- path: CounterApp

excludes:

- "Info-*.plist"

scheme:

management:

shared: true

dependencies:

- package: Shared

- package: App

targets:

CounterApp-iOS:

templates: [app]

platform: iOS

deploymentTarget: 18.0

info:

path: CounterApp/Info-iOS.plist

properties:

UISupportedInterfaceOrientations:

- UIInterfaceOrientationPortrait

- UIInterfaceOrientationLandscapeLeft

- UIInterfaceOrientationLandscapeRight

UILaunchScreen: {}

CounterApp-macOS:

templates: [app]

platform: macOS

deploymentTarget: "15.0"

info:

path: CounterApp/Info-macOS.plist

properties:

NSSupportsAutomaticGraphicsSwitching: true

settings:

OTHER_LDFLAGS: [-w]

ENABLE_USER_SCRIPT_SANDBOXING: NO

Nothing too special, other than linking a couple packages and using them as dependencies.

With that, you can run

just dev

Simple - just dev! So what exactly happened?

The core built, including the FFI and the extra CLI binary, which was then called

to generate Swift code, and that was then packaged as a Swift package. You can

look at the generated directory, and you'll see two Swift packages - Shared and App,

just like we asked in project.yml. The Shared package has our app as a static lib and all the

generated FFI code for our FFI bindings, and the App package has the key types we will need.

No need to spend much time in here, but this is all the low-level glue code sorted out. Now we need to actually build some UI and we can run our app.

Building the UI

To add some UI, we need to do three things: wrap the core with a simple Swift interface, build a basic View to give us something to put on screen, and use that view as our main app view.

Wrap the core

The generated code still works with byte buffers, so lets give ourselves a nicer interface for it:

// apple/CounterApp/core.swift

import App

import Foundation

import Shared

@MainActor

class Core: ObservableObject {

@Published var view: ViewModel

private var core: CoreFfi

init() {

self.core = CoreFfi()

// swiftlint:disable:next force_try

self.view = try! .bincodeDeserialize(input: [UInt8](core.view()))

}

func update(_ event: Event) {

// swiftlint:disable:next force_try

let effects = [UInt8](core.update(data: Data(try! event.bincodeSerialize())))

// swiftlint:disable:next force_try

let requests = try! Requests.bincodeDeserialize(input: effects).value

for request in requests {

processEffect(request)

}

}

func processEffect(_ request: Request) {

switch request.effect {

case .render:

DispatchQueue.main.async {

// swiftlint:disable:next force_try

self.view = try! .bincodeDeserialize(input: [UInt8](self.core.view()))

}

}

}

}

This is mostly just serialization code. But the processEffect method is interesting.

That is where effect execution goes. At the moment the switch statement has a single

lonely case updating the view model whenever the .render variant is requested,

but you can add more in here later, as you expand your Effect type.

Build a basic view

Xcode should've generated a ContentView file for you in apple/CounterApp/ContentView.swift.

Change it to look like this:

import App

import SwiftUI

struct ContentView: View {

@ObservedObject var core: Core

var body: some View {

VStack {

Image(systemName: "globe")

.imageScale(.large)

.foregroundColor(.accentColor)

Text(core.view.count)

HStack {

ActionButton(label: "Reset", color: .red) {

core.update(.reset)

}

ActionButton(label: "Inc", color: .green) {

core.update(.increment)

}

ActionButton(label: "Dec", color: .yellow) {

core.update(.decrement)

}

}

}

}

}

struct ActionButton: View {

var label: String

var color: Color

var action: () -> Void

init(label: String, color: Color, action: @escaping () -> Void) {

self.label = label

self.color = color

self.action = action

}

var body: some View {

Button(action: action) {

Text(label)

.fontWeight(.bold)

.font(.body)

.padding(EdgeInsets(top: 10, leading: 15, bottom: 10, trailing: 15))

.background(color)

.cornerRadius(10)

.foregroundColor(.white)

.padding()

}

}

}

#Preview {

ContentView(core: Core())

}

And finally, make sure apple/CounterApp/CounterApp.swift looks like this to use

the ContentView:

import SwiftUI

@main

struct CounterApp: App {

var body: some Scene {

WindowGroup {

ContentView(core: Core())

}

}

}

The one interesting part of this is the @ObservedObject var core: Core. Since the Core is

an ObservableObject, we can subscribe to it to refresh our view. And we've marked the view

property as @Published, so whenever we set it, the View will draw.

The view then simply shows the core.view.count in a Text and whenever we press a button, we directly

call core.update() with the appropriate action.

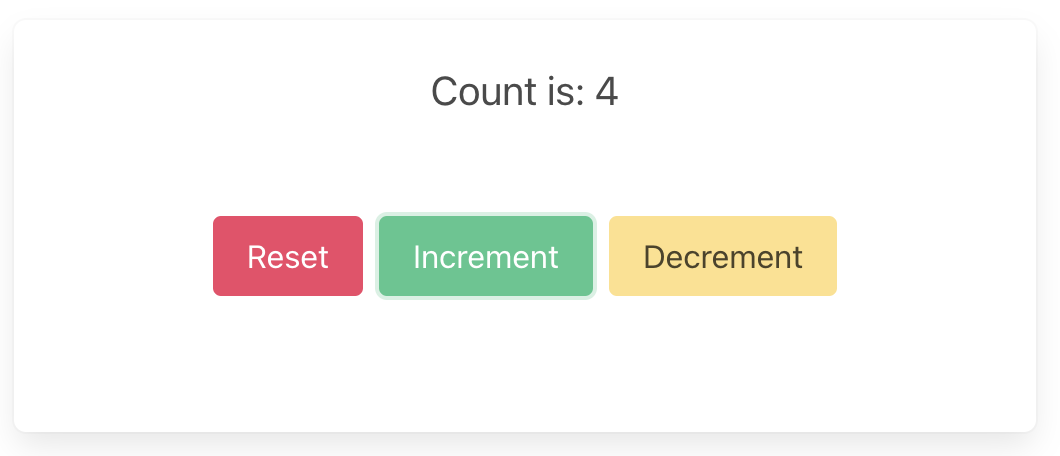

You should then be able to run the app in the simulator, on an iPhone, or as a macOS app, and it should look like this:

Android — Kotlin and Jetpack Compose

This section has not been fully updated to match the rest of the documentation and some parts may not match how Crux works any more.

Bear with us while we update — use the iOS/macOS section as the most up-to-date template to follow.

When we use Crux to build Android apps, the Core API bindings are generated in Kotlin using Mozilla's UniFFI.

The shared core (that contains our app's behaviour) is compiled to a dynamic library, using Mozilla's Rust gradle plugin for Android and the Android NDK. The library is loaded at runtime using Java Native Access.

The shared types are generated by Crux as Kotlin packages, which we

can add to our Android project using sourceSets. The Kotlin code

to serialise and deserialise these types across the boundary is also

generated by Crux.

These are the steps to set up Android Studio to build and run a simple Android app that calls into a shared core.

We want to make setting up Android Studio to work with Crux really easy. As time progresses we will try to simplify and automate as much as possible, but at the moment there is some manual configuration to do. This only needs doing once, so we hope it's not too much trouble. If you know of any better ways than those we describe below, please either raise an issue (or a PR) at https://github.com/redbadger/crux.

This walkthrough uses Mozilla's excellent Rust gradle plugin

for Android, which uses Python. However, pipes has recently been removed from Python (since Python 3.13)

so you may encounter an error linking your shared library.

If you hit this problem, you can either:

- use an older Python (<3.13)

- wait for a fix (see this issue)

- or use a different plugin — there is a PR in the Crux repo that

explores the use of

cargo-ndkand thecargo-ndk-androidplugin that may be useful.

Create an Android App

The first thing we need to do is create a new Android app in Android Studio.

Open Android Studio and create a new project, for "Phone and Tablet", of type "Empty Activity". In this walk-through, we'll call it "SimpleCounter"

- "Name":

SimpleCounter - "Package name":

com.example.counter - "Save Location": a directory called

Androidat the root of our monorepo - "Minimum SDK"

API 34 - "Build configuration language":

Kotlin DSL (build.gradle.kts)

Your repo's directory structure might now look something like this (some files elided):

.

├── Android

│ ├── app

│ │ ├── build.gradle.kts

│ │ └── src

│ │ └── main

│ │ ├── AndroidManifest.xml

│ │ └── java/com/crux/examples/counter

│ │ ├── Core.kt

│ │ └── MainActivity.kt

│ ├── build.gradle.kts

│ ├── gradle.properties

│ ├── settings.gradle.kts

│ └── shared

│ └── build.gradle.kts

├── Cargo.lock

├── Cargo.toml

└── shared

├── Cargo.toml

├── uniffi.toml

└── src

├── app.rs

├── bin

│ └── codegen.rs

├── ffi.rs

└── lib.rs

Add a Kotlin Android Library

This shared Android library (aar) is going to wrap our shared Rust library.

Under File -> New -> New Module, choose "Android Library" and give it the "Module name"

shared. Set the "Package name" to match the one from your

/shared/uniffi.toml, which in this example is com.example.counter.shared.

Again, set the "Build configuration language" to Kotlin DSL (build.gradle.kts).

For more information on how to add an Android library see https://developer.android.com/studio/projects/android-library.

We can now add this library as a dependency of our app.

Edit the app's build.gradle.kts (/Android/app/build.gradle.kts) to look like

this:

import org.jetbrains.kotlin.gradle.dsl.JvmTarget

plugins {

alias(libs.plugins.android.application)

alias(libs.plugins.kotlin.android)

alias(libs.plugins.kotlin.compose)

}

android {

namespace = "com.crux.examples.counter"

compileSdk {

version = release(36)

}

defaultConfig {

applicationId = "com.crux.examples.counter"

minSdk = 34

targetSdk = 36

versionCode = 1

versionName = "1.0"

testInstrumentationRunner = "androidx.test.runner.AndroidJUnitRunner"

}

buildTypes {

release {

isMinifyEnabled = false

proguardFiles(

getDefaultProguardFile("proguard-android-optimize.txt"),

"proguard-rules.pro"

)

}

}

compileOptions {

sourceCompatibility = JavaVersion.VERSION_11

targetCompatibility = JavaVersion.VERSION_11

}

kotlin {

compilerOptions {

jvmTarget = JvmTarget.JVM_11

}

}

buildFeatures {

compose = true

}

}

dependencies {

// our shared library

implementation(project(":shared"))

// added dependencies

implementation(libs.lifecycle.viewmodel.compose)

// original dependencies

implementation(libs.androidx.core.ktx)

implementation(libs.androidx.lifecycle.runtime.ktx)

implementation(libs.androidx.activity.compose)

implementation(platform(libs.androidx.compose.bom))

implementation(libs.androidx.compose.ui)

implementation(libs.androidx.compose.ui.graphics)

implementation(libs.androidx.compose.ui.tooling.preview)

implementation(libs.androidx.compose.material3)

testImplementation(libs.junit)

androidTestImplementation(libs.androidx.junit)

androidTestImplementation(libs.androidx.espresso.core)

androidTestImplementation(platform(libs.androidx.compose.bom))

androidTestImplementation(libs.androidx.compose.ui.test.junit4)

debugImplementation(libs.androidx.compose.ui.tooling)

debugImplementation(libs.androidx.compose.ui.test.manifest)

}

In our Gradle files, we are referencing a "Version Catalog" to manage our dependency versions, so you will need to ensure this is kept up to date.

Our catalog (Android/gradle/libs.versions.toml) will end up looking like this:

[versions]

agp = "8.13.2"

kotlin = "2.3.0"

coreKtx = "1.17.0"

junit = "4.13.2"

junitVersion = "1.3.0"

espressoCore = "3.7.0"

lifecycleRuntimeKtx = "2.10.0"

activityCompose = "1.12.3"

composeBom = "2026.01.01"

jna = "5.18.1"

lifecycle = "2.10.0"

rustAndroid = "0.9.6"

[libraries]

androidx-core-ktx = { group = "androidx.core", name = "core-ktx", version.ref = "coreKtx" }

junit = { group = "junit", name = "junit", version.ref = "junit" }

androidx-junit = { group = "androidx.test.ext", name = "junit", version.ref = "junitVersion" }

androidx-espresso-core = { group = "androidx.test.espresso", name = "espresso-core", version.ref = "espressoCore" }

androidx-lifecycle-runtime-ktx = { group = "androidx.lifecycle", name = "lifecycle-runtime-ktx", version.ref = "lifecycleRuntimeKtx" }

androidx-activity-compose = { group = "androidx.activity", name = "activity-compose", version.ref = "activityCompose" }

androidx-compose-bom = { group = "androidx.compose", name = "compose-bom", version.ref = "composeBom" }

androidx-compose-ui = { group = "androidx.compose.ui", name = "ui" }

androidx-compose-ui-graphics = { group = "androidx.compose.ui", name = "ui-graphics" }

androidx-compose-ui-tooling = { group = "androidx.compose.ui", name = "ui-tooling" }

androidx-compose-ui-tooling-preview = { group = "androidx.compose.ui", name = "ui-tooling-preview" }

androidx-compose-ui-test-manifest = { group = "androidx.compose.ui", name = "ui-test-manifest" }

androidx-compose-ui-test-junit4 = { group = "androidx.compose.ui", name = "ui-test-junit4" }

androidx-compose-material3 = { group = "androidx.compose.material3", name = "material3" }

jna = { module = "net.java.dev.jna:jna", version.ref = "jna" }

lifecycle-viewmodel-compose = { module = "androidx.lifecycle:lifecycle-viewmodel-compose", version.ref = "lifecycle" }

[plugins]

android-application = { id = "com.android.application", version.ref = "agp" }

kotlin-android = { id = "org.jetbrains.kotlin.android", version.ref = "kotlin" }

kotlin-compose = { id = "org.jetbrains.kotlin.plugin.compose", version.ref = "kotlin" }

android-library = { id = "com.android.library", version.ref = "agp" }

rust-android = { id = "org.mozilla.rust-android-gradle.rust-android", version.ref = "rustAndroid" }

The Rust shared library

We'll use the following tools to incorporate our Rust shared library into the Android library added above. This includes compiling and linking the Rust dynamic library and generating the runtime bindings and the shared types.

- The Android NDK

- Mozilla's Rust gradle plugin

for Android

- This plugin depends on Python 3, make sure you have a version installed

- Java Native Access

- Uniffi to generate Java bindings

The NDK can be installed from "Tools, SDK Manager, SDK Tools" in Android Studio.

Let's get started.

Add the four rust android toolchains to your system:

$ rustup target add aarch64-linux-android armv7-linux-androideabi i686-linux-android x86_64-linux-android

Edit the project's build.gradle.kts (/Android/build.gradle.kts) to look like

this:

// Top-level build file where you can add configuration options common to all sub-projects/modules.

plugins {

alias(libs.plugins.android.application) apply false

alias(libs.plugins.kotlin.android) apply false

alias(libs.plugins.kotlin.compose) apply false

alias(libs.plugins.android.library) apply false

alias(libs.plugins.rust.android) apply false

}

Edit the library's build.gradle.kts (/Android/shared/build.gradle.kts) to look

like this:

import com.android.build.gradle.tasks.MergeSourceSetFolders

import com.nishtahir.CargoBuildTask

import com.nishtahir.CargoExtension

import org.jetbrains.kotlin.gradle.dsl.JvmTarget

plugins {

alias(libs.plugins.android.library)

alias(libs.plugins.kotlin.android)

alias(libs.plugins.rust.android)

}

android {

namespace = "com.crux.examples.counter"

compileSdk {

version = release(36)

}

ndkVersion = "29.0.14206865"

defaultConfig {

minSdk = 34

}

compileOptions {

sourceCompatibility = JavaVersion.VERSION_11

targetCompatibility = JavaVersion.VERSION_11

}

kotlin {

compilerOptions {

jvmTarget = JvmTarget.JVM_11

}

}

sourceSets {

getByName("main") {

// types are now generated in kotlin

kotlin.srcDirs("${projectDir}/../generated")

}

}

}

dependencies {

implementation(libs.jna) {

artifact {

type = "aar"

}

}

}

extensions.configure<CargoExtension>("cargo") {

// workspace, so build at root, with `--package shared`

module = "../.."

libname = "shared"

profile = "debug"

// these are the four recommended targets for Android that will ensure your library works on all mainline android devices

// make sure you have included the rust toolchain for each of these targets: \

// `rustup target add aarch64-linux-android armv7-linux-androideabi i686-linux-android x86_64-linux-android`

targets = listOf("arm", "arm64", "x86", "x86_64")

extraCargoBuildArguments = listOf("--package", "shared", "--features", "uniffi")

cargoCommand = System.getProperty("user.home") + "/.cargo/bin/cargo"

rustcCommand = System.getProperty("user.home") + "/.cargo/bin/rustc"

pythonCommand = "python3"

}

afterEvaluate {

// The `cargoBuild` task isn't available until after evaluation.

android.libraryVariants.configureEach {

var productFlavor = ""

productFlavors.forEach { flavor ->

productFlavor += flavor.name.replaceFirstChar { char -> char.uppercaseChar() }

}

val buildType = buildType.name.replaceFirstChar { char -> char.uppercaseChar() }

tasks.named("generate${productFlavor}${buildType}Assets") {

dependsOn(tasks.named("cargoBuild"))

}

// The below dependsOn is needed till https://github.com/mozilla/rust-android-gradle/issues/85 is resolved this fix was got from #118

tasks.withType<CargoBuildTask>().forEach { buildTask ->

tasks.withType<MergeSourceSetFolders>().configureEach {

inputs.dir(

File(

File(layout.buildDirectory.asFile.get(), "rustJniLibs"),

buildTask.toolchain?.folder!!

)

)

dependsOn(buildTask)

}

}

}

}

// The below dependsOn is needed till https://github.com/mozilla/rust-android-gradle/issues/85 is resolved this fix was got from #118

tasks.matching { it.name.matches(Regex("merge.*JniLibFolders")) }.configureEach {

inputs.dir(File(layout.buildDirectory.asFile.get(), "rustJniLibs/android"))

dependsOn("cargoBuild")

}

You will need to set the ndkVersion to one you have installed, go to "Tools, SDK Manager, SDK Tools" and check "Show Package Details" to get your installed version, or to install the version matching build.gradle.kts above.

If you now build your project you should see the newly built shared library object file.

$ ls --tree Android/shared/build/rustJniLibs

Android/shared/build/rustJniLibs

└── android

└── arm64-v8a

└── libshared.so

└── armeabi-v7a

└── libshared.so

└── x86

└── libshared.so

└── x86_64

└── libshared.so

You should also see the generated types in the Android/generated

folder — note that the sourceSets directive in the shared library

gradle file (above) allows us to build our shared library against

these generated types.

$ ls --tree Android/generated

Android/generated

└── com

├── crux

│ └── examples

│ └── simplecounter

│ ├── Requests.kt

│ ├── shared.kt

│ └── Simplecounter.kt

└── novi

├── bincode

│ ├── BincodeDeserializer.kt

│ └── BincodeSerializer.kt

└── serde

├── BinaryDeserializer.kt

├── BinarySerializer.kt

├── ...

└── Unsigned.kt

Create some UI and run in the Simulator

Wrap the core to support capabilities

First, let's add some boilerplate code to wrap our core and handle the

capabilities that we are using. For this example, we only need to support the

Render capability, which triggers a render of the UI.

Let's create a file "File, New, Kotlin Class/File, File" called Core.

This code that wraps the core only needs to be written once — it only grows when we need to support additional capabilities.

Edit Android/app/src/main/java/com/crux/examples/counter/Core.kt to look like

the following. This code sends our (UI-generated) events to the core, and

handles any effects that the core asks for. In this simple example, we aren't

calling any HTTP APIs or handling any side effects other than rendering the UI,

so we just handle this render effect by updating the published view model from

the core.

package com.crux.examples.counter

import androidx.compose.runtime.getValue

import androidx.compose.runtime.mutableStateOf

import androidx.compose.runtime.setValue

open class Core : androidx.lifecycle.ViewModel() {

private var core: CoreFfi = CoreFfi()

var view: ViewModel by mutableStateOf(

ViewModel.bincodeDeserialize(core.view())

)

private set

fun update(event: Event) {

val effects = core.update(event.bincodeSerialize())

val requests = Requests.bincodeDeserialize(effects).value

for (request in requests) {

processEffect(request)

}

}

private fun processEffect(request: Request) {

when (val effect = request.effect) {

is Effect.Render -> {

this.view = ViewModel.bincodeDeserialize(core.view())

}

}

}

}

That when statement, above, is where you would handle any other

effects that your core might ask for. For example, if your core needs

to make an HTTP request, you would handle that here.

Edit /Android/app/src/main/java/com/crux/examples/counter/MainActivity.kt to

look like the following:

package com.crux.examples.counter

import android.os.Bundle

import androidx.activity.ComponentActivity

import androidx.activity.compose.setContent

import androidx.compose.foundation.layout.Arrangement

import androidx.compose.foundation.layout.Column

import androidx.compose.foundation.layout.Row

import androidx.compose.foundation.layout.fillMaxSize

import androidx.compose.foundation.layout.padding

import androidx.compose.material3.Button

import androidx.compose.material3.ButtonDefaults

import androidx.compose.material3.MaterialTheme

import androidx.compose.material3.Surface

import androidx.compose.material3.Text

import androidx.compose.runtime.Composable

import androidx.compose.runtime.rememberCoroutineScope

import androidx.compose.ui.Alignment

import androidx.compose.ui.Modifier

import androidx.compose.ui.graphics.Color

import androidx.compose.ui.tooling.preview.Preview

import androidx.compose.ui.unit.dp

import androidx.lifecycle.viewmodel.compose.viewModel

import com.crux.examples.counter.ui.theme.CounterTheme

import kotlinx.coroutines.launch

class MainActivity : ComponentActivity() {

override fun onCreate(savedInstanceState: Bundle?) {

super.onCreate(savedInstanceState)

setContent {

CounterTheme {

Surface(

modifier = Modifier.fillMaxSize(),

color = MaterialTheme.colorScheme.background

) { View() }

}

}

}

}

@Composable

fun View(core: Core = viewModel()) {

val scope = rememberCoroutineScope()

Column(

horizontalAlignment = Alignment.CenterHorizontally,

verticalArrangement = Arrangement.Center,

modifier = Modifier.fillMaxSize().padding(10.dp),

) {

Text(text = core.view.count, modifier = Modifier.padding(10.dp))

Row(horizontalArrangement = Arrangement.spacedBy(10.dp)) {

Button(

onClick = { scope.launch { core.update(Event.RESET) } },

colors =

ButtonDefaults.buttonColors(

containerColor = MaterialTheme.colorScheme.error

)

) { Text(text = "Reset", color = Color.White) }

Button(

onClick = { scope.launch { core.update(Event.INCREMENT) } },

colors =

ButtonDefaults.buttonColors(

containerColor = MaterialTheme.colorScheme.primary

)

) { Text(text = "Increment", color = Color.White) }

Button(

onClick = { scope.launch { core.update(Event.DECREMENT) } },

colors =

ButtonDefaults.buttonColors(

containerColor = MaterialTheme.colorScheme.secondary

)

) { Text(text = "Decrement", color = Color.White) }

}

}

}

@Preview(showBackground = true)

@Composable

fun DefaultPreview() {

CounterTheme { View() }

}

Web — TypeScript and React (Next.js)

These are the steps to set up and run a simple TypeScript Web app that calls into a shared core.

This walk-through assumes you have already set up the

shared library and codegen as described in

Shared core and types.

Create a Next.js App

For this walk-through, we'll use the

pnpm package manager for no

reason other than we like it the most!

Let's create a simple Next.js app for TypeScript,

using pnpx (from pnpm). You can probably accept

the defaults.

pnpx create-next-app@latest

Compile our Rust shared library

When we build our app, we also want to compile the Rust core to WebAssembly so that it can be referenced from our code.

To do this, we'll use

wasm-pack,

which you can install like this:

# with homebrew

brew install wasm-pack

# or directly

curl https://rustwasm.github.io/wasm-pack/installer/init.sh -sSf | sh

Now that we have wasm-pack installed, we can build

our shared library to WebAssembly for the browser.

wasm-pack build \

--target web \

--out-dir ../web-nextjs/generated/pkg \

../shared \

--features wasm_bindgen

Generate the Shared Types

To generate the shared types for TypeScript, we use the codegen CLI we prepared earlier:

cargo run --package shared --bin codegen \

--features codegen,facet_typegen \

-- --language typescript \

--output-dir generated/types

Both the Wasm package and the generated types are

referenced as local dependencies in package.json:

{

"dependencies": {

"shared": "file:generated/pkg",

"shared_types": "file:generated/types"

}

}

Install the dependencies:

pnpm install

Create some UI

Counter example

A simple app that increments, decrements and resets a counter.

Wrap the core to handle effects

First, let's add some boilerplate code to wrap our core

and handle the effects that it produces. For this

example, we only need to support the Render effect,

which triggers a render of the UI.

This code that wraps the core only needs to be written once — it only grows when we need to support additional effects.

Edit src/app/core.ts to look like the following.

This code sends our (UI-generated) events to the core,

and handles any effects that the core asks for. In this

example, we aren't calling any HTTP APIs or handling

any side effects other than rendering the UI, so we

just handle this render effect by updating the

component's view hook with the core's ViewModel.

Notice that we have to serialize and deserialize the data that we pass between the core and the shell. This is because the core is running in a separate WebAssembly instance, and so we can't just pass the data directly.

import type { Dispatch, SetStateAction } from "react";

import { CoreFFI } from "shared";

import type { Effect, Event } from "shared_types/app";

import { EffectVariantRender, Request, ViewModel } from "shared_types/app";

import { BincodeDeserializer, BincodeSerializer } from "shared_types/bincode";

import init_core from "shared/shared";

export class Core {

core: CoreFFI | null = null;

initializing: Promise<void> | null = null;

setState: Dispatch<SetStateAction<ViewModel>>;

constructor(setState: Dispatch<SetStateAction<ViewModel>>) {

// Don't initialize CoreFFI here - wait for WASM to be loaded

this.setState = setState;

}

initialize(shouldLoad: boolean): Promise<void> {

if (this.core) {

return Promise.resolve();

}

if (!this.initializing) {

const load = shouldLoad ? init_core() : Promise.resolve();

this.initializing = load

.then(() => {

this.core = new CoreFFI();

this.setState(this.view());

})

.catch((error) => {

this.initializing = null;

console.error("Failed to initialize wasm core:", error);

});

}

return this.initializing;

}

view(): ViewModel {

if (!this.core) {

throw new Error("Core not initialized. Call initialize() first.");

}

return deserializeView(this.core.view());

}

update(event: Event) {

if (!this.core) {

throw new Error("Core not initialized. Call initialize() first.");

}

const serializer = new BincodeSerializer();

event.serialize(serializer);

const effects = this.core.update(serializer.getBytes());

const requests = deserializeRequests(effects);

for (const { effect } of requests) {

this.processEffect(effect);

}

}

private processEffect(effect: Effect) {

switch (effect.constructor) {

case EffectVariantRender: {

this.setState(this.view());

break;

}

}

}

}

function deserializeRequests(bytes: Uint8Array): Request[] {

const deserializer = new BincodeDeserializer(bytes);

const len = deserializer.deserializeLen();

const requests: Request[] = [];

for (let i = 0; i < len; i++) {

const request = Request.deserialize(deserializer);

requests.push(request);

}

return requests;

}

function deserializeView(bytes: Uint8Array): ViewModel {

return ViewModel.deserialize(new BincodeDeserializer(bytes));

}

That switch statement, above, is where you would

handle any other effects that your core might ask for.

For example, if your core needs to make an HTTP

request, you would handle that here. To see an example

of this, take a look at the

counter example

in the Crux repository.

Create a component to render the UI

Edit src/app/page.tsx to look like the following.

This code loads the WebAssembly core and sends it an

initial event. Notice that we pass the setState hook

to the update function so that we can update the state

in response to a render effect from the core.

"use client";

import type { NextPage } from "next";

import { useEffect, useRef, useState } from "react";

import {

ViewModel,

EventVariantReset,

EventVariantIncrement,

EventVariantDecrement,

} from "shared_types/app";

import { Core } from "./core";

const Home: NextPage = () => {

const [view, setView] = useState(new ViewModel(""));

const core = useRef(new Core(setView));

useEffect(() => {

void core.current.initialize(true);

}, []);

return (

<main>

<section className="box container has-text-centered m-5">

<p className="is-size-5">{view.count}</p>

<div className="buttons section is-centered">

<button

className="button is-primary is-danger"

onClick={() => core.current.update(new EventVariantReset())}

>

{"Reset"}

</button>

<button

className="button is-primary is-success"

onClick={() => core.current.update(new EventVariantIncrement())}

>

{"Increment"}

</button>

<button

className="button is-primary is-warning"

onClick={() => core.current.update(new EventVariantDecrement())}

>

{"Decrement"}

</button>

</div>

</section>

</main>

);

};

export default Home;

Now all we need is some CSS. First add the Bulma

package, and then import it in layout.tsx.

pnpm add bulma

import "bulma/css/bulma.min.css";

import type { Metadata } from "next";

import { Inter } from "next/font/google";

const inter = Inter({ subsets: ["latin"] });

export const metadata: Metadata = {

title: "Crux Simple Counter Example",

description: "Rust Core, TypeScript Shell (NextJS)",

};

export default function RootLayout({

children,

}: {

children: React.ReactNode;

}) {

return (

<html lang="en">

<body className={inter.className}>{children}</body>

</html>

);

}

Build and serve our app

We can build our app, and serve it for the browser, in one simple step.

pnpm dev

Web — Rust and Leptos

These are the steps to set up and run a simple Rust Web app that calls into a shared core.

This walk-through assumes you have already set up the